Coming into this earnings season, the key question was not whether AI demand was strong. That has been clear for some time. The more important question was whether the reported returns would start to reflect and justify the massive capital investments. Amazon, Meta, Google and Microsoft all reported on the same day and did not disappoint, each delivering strong AI-related revenue growth.

The clearest example was Google Cloud, which grew 63% year on year in the latest quarter, up from 48% and 36% in the two previous quarters. That is not just a high growth rate; it is an acceleration. More importantly, the hyperscalers indicated that revenue growth would have been even stronger if they had had more compute to offer.

The message is clear: they are not short of demand; they are short of compute. That distinction matters. In a typical demand-constrained business, growth is determined by customer appetite. In a supply-constrained business, growth is determined by the speed at which capacity can be added. Once supply becomes the constraint, the bottleneck shifts from software into the physical world: data centres, power availability, grid connections, transformers, switchgear, turbines, cooling systems and construction capacity.

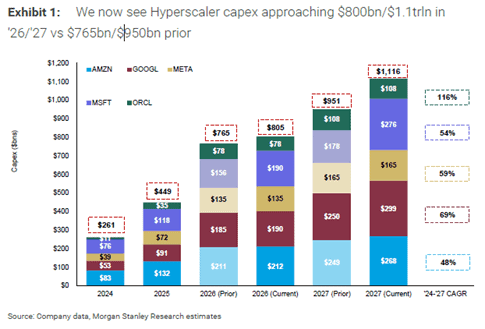

This is also visible in the capex trajectory. The four largest hyperscalers lifted 2026 capex guidance by roughly USD 80bn in aggregate, about 12% higher than forecasts only three months ago. Capex for 2026 is now expected to be almost 80% (USD 356bn) higher than in 2025, implying that roughly 1% of US GDP, or close to half the expected GDP growth in 2026, could come from the investments of just four companies.

Similarly for 2027, aggregate capex forecasts for the five hyperscalers have been revised from USD 951bn to USD 1.1tn, close to 3.5% of US GDP. For now, this more than offsets the estimated 0.5%-1.0% drag on US GDP from higher oil prices.
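The arithmetic behind these figures can be sketched roughly as follows. This is a back-of-the-envelope check rather than our forecasting model: it takes the rounded numbers quoted above at face value and backs out what they imply. The function and variable names are purely illustrative, and the implied GDP level is derived from the 3.5% share rather than taken from any official statistic.

```python
def implied_base(increase_bn: float, growth_rate: float) -> float:
    """Base-year level implied by an absolute increase and a growth rate."""
    return increase_bn / growth_rate


# 2026: capex expected to be almost 80% (USD 356bn) higher than in 2025.
increase_2026 = 356.0                               # USD bn, as quoted
capex_2025 = implied_base(increase_2026, 0.80)      # implied 2025 capex, USD bn
capex_2026 = capex_2025 + increase_2026             # implied 2026 capex, USD bn

# 2027: forecasts revised from USD 951bn to USD 1.1tn, close to 3.5% of US GDP.
revision_2027 = 1100.0 / 951.0 - 1.0                # relative size of the revision
implied_gdp_bn = 1100.0 / 0.035                     # GDP level implied by the 3.5% share

# Assumption: reuse that implied GDP level to size the 2026 increase.
increase_share_of_gdp = increase_2026 / implied_gdp_bn

print(f"Implied 2025 capex: USD {capex_2025:.0f}bn")
print(f"Implied 2026 capex: USD {capex_2026:.0f}bn")
print(f"2027 forecast revision: {revision_2027:.1%}")
print(f"Implied US GDP: USD {implied_gdp_bn / 1000:.1f}tn")
print(f"2026 capex increase as share of GDP: {increase_share_of_gdp:.1%}")
```

On these rounded inputs, the implied 2025 base is about USD 445bn, and the 2026 increase works out to a little over 1% of the implied GDP level, in line with the "roughly 1%" cited above.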

It is not only the need for more compute that is driving the capex bonanza. A big part is cost inflation fuelled by tight supply chains and rising competition for scarce resources. No hyperscaler wants to risk falling behind in what is often framed as a “winners-take-all” market. Usage of AI has migrated strongly toward the best models; hence, if one player slows spending, it risks losing share quickly. This spending flows directly into the physical layer of the AI economy, where supply chains are slower to respond, lead times are longer and pricing power is often better than in the software layer. This is why we continue to believe that the power and infrastructure enablers remain one of the more attractive ways to gain exposure to the AI investment cycle.

While we are genuinely positive on AI and expect a significant productivity boost, there are several paradoxes.

First, if the hyperscalers are genuinely capacity constrained, with demand for compute and tokens significantly higher than supply, why are prices not rising more aggressively? Most heavy users of AI would agree that the benefits far outweigh the cost, suggesting tokens are currently mispriced.

Management teams might argue it is “too early”, with everyone still in land‑grab mode and AI losses subsidised by legacy profit pools. However, part of the reluctance to raise prices may also reflect intense competition and the fact that switching costs for many AI customers are, for now at least, relatively low.

Second, many argue that hyperscalers are racing toward Artificial General Intelligence (AGI), where the “winner takes all” as self‑improvement loops drive accelerating model performance that competitors cannot match. If that is even partly true, what does that imply for the returns on capital invested by the runners‑up? There might be room for more than one large model, and sub‑AGI systems can still earn attractive returns if they successfully lock customers into their ecosystems. But given the extraordinary scale of investment, the risk of sub‑par returns at the platform level is not trivial.

Third, at a macro level the question is whether AI will generate enough incremental productivity to justify the capital deployed, or whether a large share of the revenue will simply be redistributed from other parts of the economy. Is this a genuine productivity boom, or largely a zero-sum redistribution? We can make arguments for both sides but lean toward considerable productivity gains on a societal level, albeit with a high risk of rising inequality as capital likely takes an even larger share of total income. On the other hand, as multiple credible competitors build similar capabilities and durable competitive advantages remain elusive, we could end up with an outcome similar to past investment booms, where much of the productivity gain is ultimately socialised rather than captured by shareholders.

We are clearly living through an unusual moment, and it will take years before we know how these tensions ultimately resolve. What does seem evident today is that the AI power and infrastructure investment boom is even stronger and more durable than we assumed for most of last year and into this year. There are physical limits to how long AI capex can grow at 70% per year, or even 30–50%, but the set‑up for companies supplying AI power and infrastructure continues to improve as the cycle extends and the bottlenecks become increasingly physical rather than digital.

In that sense, the key point is not the exact capex number for any given year, but the direction of travel. Estimates for AI-related infrastructure spending keep rising, especially into 2027. The arms race is still accelerating, and for our universe the binding constraint remains unchanged: the world can write software faster than it can build the power systems, grids and data centre infrastructure needed to run it.

- Portfolio manager and founder of the Coeli Energy Opportunities fund.

- More than 15 years of investment experience across both public markets and private equity.

- Managed the Coeli Energy Transition fund from 2019 to 2023.

- Spent six years at Horizon Asset in London, a market-neutral hedge fund.

- Began working with Vidar Kalvoy in 2012.

- Five years in private equity at Morgan Stanley.

- Started his investment career in the technology sector at Sweden Robur in Stockholm in 2006.

- Holds a Master of Science in Engineering (Civilingenjör) from Kungliga Tekniska Högskolan (KTH Royal Institute of Technology).

- Portfolio manager and founder of the Coeli Energy Opportunities fund.

- Has managed energy-sector equities since 2006 and has more than 20 years of experience in portfolio management and equity research.

- Managed the Coeli Energy Transition fund from 2019 to 2023.

- Responsible for energy investments at Horizon Asset in London, a market-neutral hedge fund, for nine years.

- Experience in energy investing at MKM Longboat in London and in technology-sector equity research in Frankfurt and Oslo.

- MBA from IESE in Barcelona and a degree in business and economics (Civilekonom) from Norges Handelshögskola (Norwegian School of Economics).

- Before moving into finance, he was a lieutenant in the Norwegian Navy.

IMPORTANT INFORMATION. This is a marketing communication.

Before making any final investment decisions, please refer to the prospectus of Coeli SICAV II, its Annual Report, and the KID of the relevant Sub-Fund. Relevant information documents are available in English at coeli.com. A summary of investor rights will be available at https://coeli.com/financial-and-legal-information/. Past performance is not a guarantee of future returns. The price of the investment may go up or down and an investor may not get back the amount originally invested. Please note that the management company of the fund may decide to terminate the arrangements made for the marketing of the fund in one or multiple jurisdictions in which such marketing arrangements exist.